5 Myths About Hardware Wallets

Hardware wallets are the strongest single-device protection available for self-custody crypto. They are also surrounded by a remarkable amount of marketing-driven mythology — secure enclaves, air-gapped protocols, attestation, and so on. Most of it is either subtly misleading or outright wrong.

This article runs through five of the most common myths and what's actually true. Some of these will be uncomfortable for hardware-wallet companies to read. We're a hardware-wallet company. We're saying it anyway because the only durable case for hardware self-custody is one that survives an honest look at the limits.

Myth 1 — "A hardware wallet makes you immune to hacking"

A hardware wallet protects your private keys from leaking. It doesn't protect you from authorizing transactions you shouldn't have.

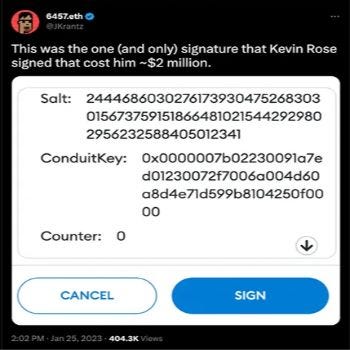

The classic example: in 2023, Kevin Rose lost $1.1M of NFTs in a phishing attack despite using a hardware wallet (Ledger) plus MetaMask. The attacker tricked him into signing an off-chain message that, when interpreted, granted approval over his NFT collection. The hardware wallet did exactly what hardware wallets do — it asked him to confirm the signing operation, and he did. The keys never left the device. The funds left anyway.

This is "blind signing": the device shows you something you can't meaningfully evaluate (a hex blob, an EIP-712 typed-data structure, a contract call that looks identical whether benign or malicious). You can have the world's most secure hardware wallet and still get drained if the wallet is happy to sign whatever the host asks it to sign.
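To make the "hex blob" problem concrete, here is a minimal sketch of what a decoder has to do before a contract call means anything to a human. It recognizes only two standard approval calls, `approve(address,uint256)` and `setApprovalForAll(address,bool)`, by their well-known 4-byte selectors. The function name `describe_calldata` and the output wording are our own illustration, not any wallet's actual implementation, and this covers on-chain calldata only, not off-chain typed-data signatures.

```python
# Illustrative sketch only: decodes two common approval calls from raw EVM calldata.
APPROVE = "095ea7b3"               # selector for approve(address,uint256)
SET_APPROVAL_FOR_ALL = "a22cb465"  # selector for setApprovalForAll(address,bool)
MAX_UINT256 = 2**256 - 1
UNKNOWN = "unknown call: cannot be evaluated by eye"


def describe_calldata(calldata_hex: str) -> str:
    """Return a plain-language summary of an approval call, if recognized."""
    data = calldata_hex.lower().removeprefix("0x")
    selector, args = data[:8], data[8:]
    if len(args) < 128:  # both calls take two 32-byte ABI words
        return UNKNOWN
    first_word, second_word = args[:64], args[64:128]
    operator = "0x" + first_word[24:]  # an address is the low 20 bytes of the word
    value = int(second_word, 16)
    if selector == APPROVE:
        amount = "UNLIMITED" if value == MAX_UINT256 else str(value)
        return f"approve: {operator} may spend {amount} tokens"
    if selector == SET_APPROVAL_FOR_ALL:
        state = "GRANTED" if value else "revoked"
        return f"setApprovalForAll: control of the whole collection {state} to {operator}"
    return UNKNOWN


# A hypothetical unlimited approval to the spender 0xabab...ab
blob = "0x095ea7b3" + "00" * 12 + "ab" * 20 + "f" * 64
print(blob)
print(describe_calldata(blob))
```

The first line printed is what a blind-signing prompt effectively shows you. The second is the least a device or companion app should show instead.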

What you actually have to do:

- Treat every signing prompt as suspicious until you understand what's being signed. If a contract address, a function name, or an amount looks unfamiliar, abort.

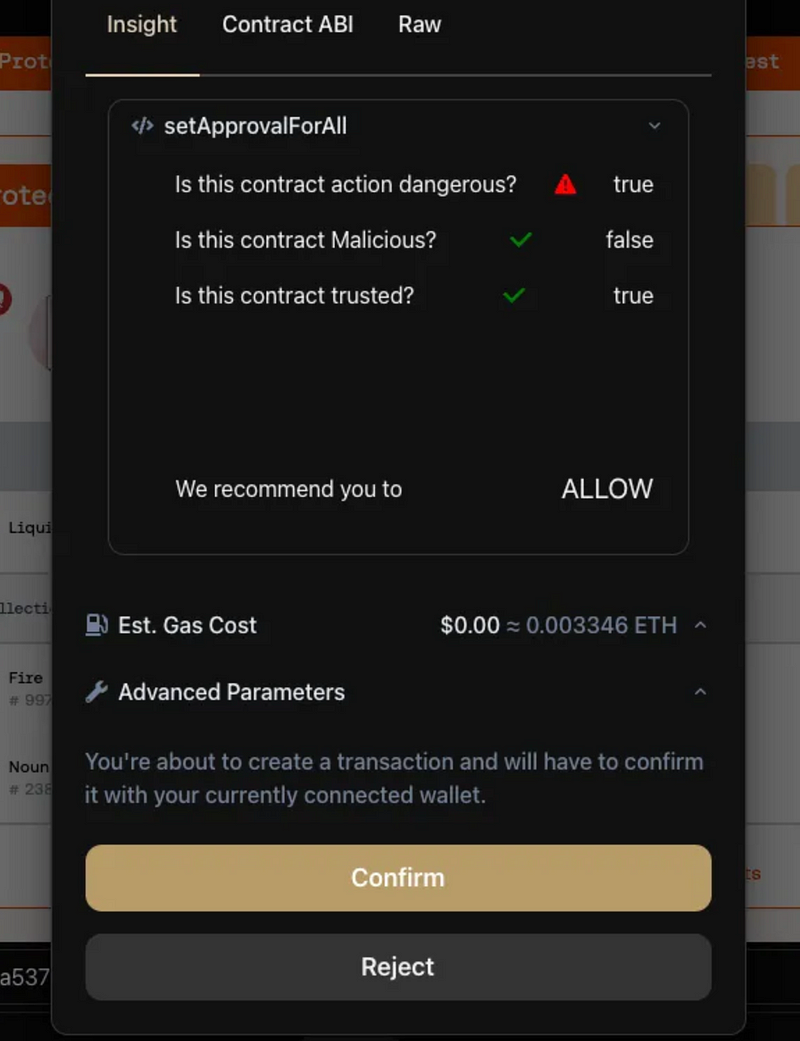

- Use transaction-insight tools that simulate the contract interaction and flag malicious or dangerous patterns before the signing prompt reaches your device. Tools like Harpie.io, Wallet Guard, and Pocket Universe maintain blacklists of known scam contracts and run preflight simulations:

  - "Is this contract dangerous?" Does the simulation show your tokens being transferred, or approvals being granted, that you didn't expect?

  - "Is this contract malicious?" Is the contract on a known scam list?

  - "Is this contract trusted?" Is it a long-standing, audited contract many other people have used?

- For routine flows, prefer chain-native signing (e.g. KeepKey + Vault Desktop's THORChain swap UX, or Sparrow for Bitcoin) over generic Web3 signing in browser extensions. Less surface for the attacker to exploit.
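The three preflight questions above can be sketched as a simple triage function. Everything here is hypothetical: the `Simulation` shape, the `preflight` name, and the string-based asset descriptions are stand-ins for illustration, and none of it reflects how Harpie, Wallet Guard, or Pocket Universe actually work internally.

```python
from dataclasses import dataclass, field


@dataclass
class Simulation:
    """Hypothetical result of simulating a transaction before it is signed."""
    contract: str
    transfers_out: list = field(default_factory=list)  # assets leaving the wallet
    approvals: list = field(default_factory=list)      # spending rights granted


def preflight(sim, expected_out, expected_approvals, scam_list, trusted_list):
    """Return a list of warnings; an empty list means nothing was flagged."""
    flags = []
    # "Dangerous": the simulation shows effects the user did not intend.
    surprises = [t for t in sim.transfers_out if t not in expected_out]
    surprises += [a for a in sim.approvals if a not in expected_approvals]
    if surprises:
        flags.append("dangerous: unexpected " + ", ".join(surprises))
    # "Malicious": the contract is already known to be a scam.
    if sim.contract in scam_list:
        flags.append("malicious: contract is on a known scam list")
    # "Trusted": absence from the trusted set is itself worth a warning.
    if sim.contract not in trusted_list:
        flags.append("untrusted: contract has no established track record")
    return flags


sim = Simulation("0xscam", transfers_out=["100 USDC"], approvals=["all NFTs"])
for warning in preflight(sim, ["100 USDC"], [], {"0xscam"}, {"0xdex"}):
    print(warning)
```

The design point is the order of operations: all three checks run on the host, before the signing request ever reaches the device.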

The hardware wallet protects your keys. You have to protect your authorization decisions.

Myth 2 — "Secure enclaves make hardware wallets meaningfully more secure"

The phrase "secure enclave" is doing a lot of marketing work. The reality is more complicated than the marketing suggests.

A few definitions first:

- Secure Enclave — a coprocessor on a device that handles cryptographic operations in isolation. Famous example: Apple's Secure Enclave on iPhone.

- Secure Element — a tamper-resistant chip designed to store and process sensitive data. Used in chip-and-PIN credit cards and in some hardware wallets (most prominently Ledger).

What both of these provide: physical resistance to chip-level attacks (laser fault injection, side-channel power analysis, etc.). What they don't provide: a guarantee that the firmware running on top of them isn't malicious.

Here's the part the marketing skips: a secure element on its own does not decide what gets signed. The element holds key material and exposes a sign-this-data API. Whatever firmware is talking to that API gets to decide what data gets signed. If that firmware is closed-source and unauditable, the security of the system reduces to "trust the manufacturer."
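A toy model makes the division of labor visible. In the sketch below, `ToySecureElement` stands in for the chip and the two `*_firmware` functions stand in for the code that drives it. All names are hypothetical, and HMAC-SHA256 is used purely as a stand-in for a real signature scheme such as ECDSA so that the example runs with the standard library alone.

```python
import hashlib
import hmac


class ToySecureElement:
    """Holds a secret and signs any digest it is handed. It never sees a 'transaction'."""

    def __init__(self, secret: bytes):
        self._secret = secret

    def sign(self, digest: bytes) -> bytes:
        # Stand-in for a real signature; the chip cannot judge what the digest represents.
        return hmac.new(self._secret, digest, hashlib.sha256).digest()


def honest_firmware(se: ToySecureElement, tx: bytes):
    """Shows the transaction and signs exactly what was shown."""
    shown_on_screen = tx
    return shown_on_screen, se.sign(hashlib.sha256(tx).digest())


def malicious_firmware(se: ToySecureElement, tx: bytes, attacker_tx: bytes):
    """Shows the requested transaction but signs a different one."""
    shown_on_screen = tx
    return shown_on_screen, se.sign(hashlib.sha256(attacker_tx).digest())


se = ToySecureElement(b"device-secret")
tx, evil = b"send 0.1 BTC to alice", b"send 50 BTC to attacker"
shown_a, sig_a = honest_firmware(se, tx)
shown_b, sig_b = malicious_firmware(se, tx, evil)
print(shown_a == shown_b)  # the screens are identical
print(sig_a == sig_b)      # the signatures are not
```

In both cases the secret never leaves the element, which is exactly why key isolation alone says nothing about whether the firmware is honest.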

Ledger's firmware is closed source. Ledger has stated they cannot fully open-source it because of contractual obligations with the secure-element chip vendor. They have committed to incrementally open more of it over time, which is a step in the right direction — but until full open-sourcing happens, their firmware has not been peer-reviewed by the security research community in the same way KeepKey, ColdCard, Trezor, and BitBox firmware have been.

The community-audit track record matters. For example:

CVE-2019-14353: A side-channel attack on row-based OLED displays where an attacker could partially recover screen contents by measuring power consumption. Reported by Christian Reitter. Fixed across the open-source hardware wallet stack — KeepKey, Trezor, and others — by ensuring every row of the display contains the same number of illuminated pixels, eliminating the power-draw signal.
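The idea behind the mitigation described above can be shown with a toy leakage model. This is not the actual firmware patch from any vendor. It assumes, for illustration only, that a row's power draw is proportional to its number of lit pixels, and that a few spare columns (here called padding) are available to even out the rows. The function names are ours.

```python
def row_power(frame):
    """Toy leakage model: each row's power draw equals its count of lit pixels."""
    return [sum(row) for row in frame]


def balance_rows(frame, pad_width):
    """Append padding columns so that every row lights the same number of pixels."""
    target = max(sum(row) for row in frame)
    balanced = []
    for row in frame:
        deficit = target - sum(row)
        if deficit > pad_width:
            raise ValueError("not enough padding columns to balance this frame")
        # Light just enough padding pixels to reach the target, leave the rest dark.
        balanced.append(row + [1] * deficit + [0] * (pad_width - deficit))
    return balanced


frame = [
    [1, 0, 0, 0],
    [1, 1, 1, 0],
    [0, 0, 0, 0],
]
print(row_power(frame))                   # differs per row, so content leaks
print(row_power(balance_rows(frame, 3)))  # identical per row, so no signal
```

Once every row draws the same power in this model, measuring consumption row by row tells the attacker nothing about what is displayed.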

Hundreds of issues like this have been found and fixed in open-source hardware-wallet firmware over the last decade. See the List of Hardware Wallet Hacks for a fuller picture. Closed-source firmware on a closed-source enclave is missing the most important defense any security system can have: external audit and continuous public review.

The good news: fully open-source secure elements are coming. Tropic Square is producing the first transparent secure-element chips, and RISC-V architecture is making fully open hardware feasible. Once those land in production wallets, the "we can't open-source it because the chip vendor won't let us" excuse goes away. KeepKey is following this work closely.

Until then, the practical takeaway: a closed-source secure element with closed-source firmware is not a stronger trust contract than open-source firmware on a well-designed microcontroller — it is a weaker one.

Myth 3 — "Air-gapped or QR-code wallets are more secure than USB"

This is one of the most stubbornly repeated myths in the space. The transport layer between the host and the device does not determine the device's security.

Several wallets — Ellipal, SafePal, NGRAVE — market "air-gapped" protocols using QR codes scanned through cameras instead of USB or Bluetooth. The pitch is that USB is an attack surface, so removing it makes you safer.

The pitch ignores how hardware-wallet security actually works. A hardware wallet's security comes from the device's display being a trusted channel between its memory and your eyes — what you see on the screen is exactly what's about to be signed. The transport (USB, Bluetooth, QR) is just the data conduit into and out of the device. The transport is auditable in all cases.

Switching from USB to QR doesn't change:

- Whether the device's firmware is auditable (the actual security question)

- Whether the device verifies signatures correctly

- Whether the screen shows you the truth about what you're signing

- Whether the device's secure element implementation is correct

It does change:

- The convenience of the device (QR-only is slow)

- The number of QR codes you'd theoretically have to audit per transaction (impractically many — nobody actually does this)

- The marketing copy

If a device's firmware is malicious, the QR codes will simply lie about the transactions before they leave the device. Air-gapping is a marketing reframe of "we removed a feature so we could call ourselves more secure." It is not, in practice, a meaningful security upgrade.

Myth 4 — "Card-style hardware wallets without screens are equally secure"

A growing class of "hardware wallets" — credit-card-form-factor devices that sign by tapping against a phone — have no display.

A device without a display is not a hardware wallet. It's a blind signer. There is no way for the user to verify what's being signed before authorizing it. The user is trusting whatever app on their phone tells them the signing prompt is for. If the phone is compromised — and modern phones are routinely compromised, especially Android devices outside the major OEM update tracks — the wallet signs whatever the malware asks it to.

The whole point of a hardware wallet's screen is that what you see is what gets signed. Remove the screen and the device falls back to "trust the host." At that point you've spent money on a worse version of a software wallet.

Card wallets have legitimate use cases: small amounts, daily spending, situations where convenience genuinely outweighs the verification gap. They should not be your cold storage. Don't pay hardware-wallet prices for a key-isolation chip and call it a hardware wallet.

Myth 5 — "Device attestation prevents tampering"

When a hardware wallet boots, it checks (via a certificate chain rooted at the manufacturer) that its firmware is authentic and signed. This is "attestation." Companies use it to prove "this device wasn't tampered with."

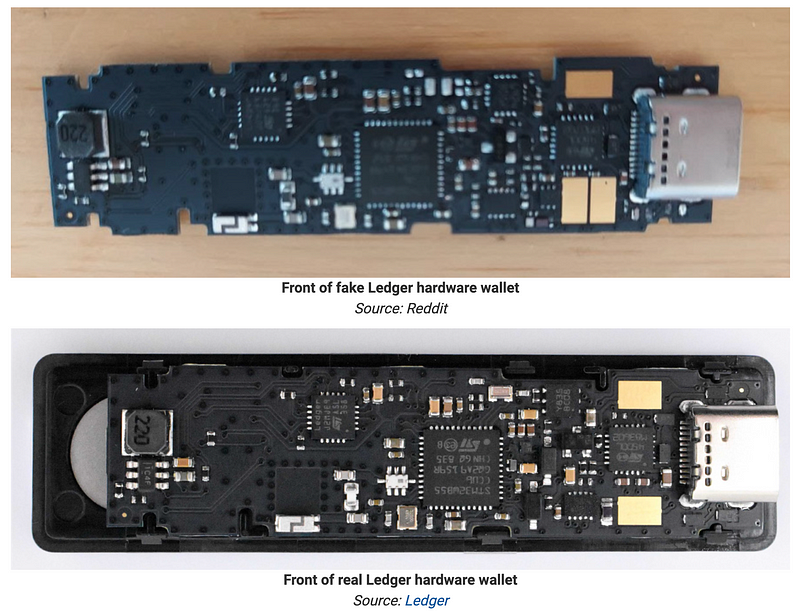

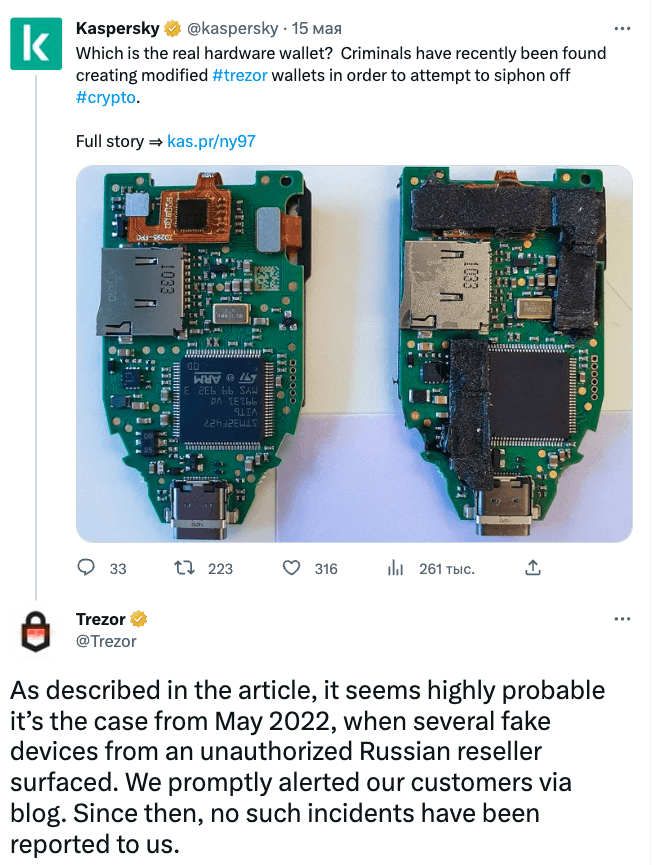

Attestation does not prove the device is untampered. It proves the firmware is authentic. A hardware-modified device with the original firmware still passes attestation just fine.
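The gap is easy to see once you look at what an attestation check takes as input. The sketch below is deliberately simplified: a real check verifies a signature through a certificate chain, whereas here a plain allowlist of SHA-256 hashes stands in for that. The `Device` shape, the `hardware_implant` field, and the function name are hypothetical.

```python
import hashlib
from dataclasses import dataclass


@dataclass
class Device:
    firmware: bytes
    hardware_implant: bool = False  # physical modification inside the case


def passes_attestation(device: Device, approved_hashes: set) -> bool:
    """Simplified check: is the firmware image one the manufacturer released?"""
    # Note what is NOT an input: nothing about the physical hardware is inspected.
    return hashlib.sha256(device.firmware).hexdigest() in approved_hashes


official = b"official firmware image"
approved = {hashlib.sha256(official).hexdigest()}

print(passes_attestation(Device(official), approved))
print(passes_attestation(Device(official, hardware_implant=True), approved))
print(passes_attestation(Device(b"patched firmware image"), approved))
```

The first two devices pass and the third fails. The implanted device is indistinguishable from the clean one because the check only ever hashes firmware bytes.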

Real-world examples:

A Ledger device with an extra hardware backdoor inside the case can pass device attestation cleanly. The chip is genuine. The firmware is signed. The added components are invisible to the attestation check.

Trezor has a helpful blog post and a security analysis by Saleem Rashid that cover this in detail. Bottom line: attestation is a useful protection against firmware-level tampering, but it gives a false sense of security about hardware-level supply-chain attacks.

Real defenses against supply-chain modification:

- Tamper-evident packaging that breaks visibly if opened.

- Tamper-resistant casing — KeepKey uses a metal case that is materially harder to open and reseal than a plastic case. Not impossible, but a significantly higher bar.

- Anti-tamper meshes — the GridPlus Lattice1 uses a 3D laser-etched mesh wrapped around the secure components; if the mesh is shorted or the embedded waveform is disturbed, the device bricks. This is the gold-standard defense against physical tampering, and we expect more devices to ship variants in coming generations.

- Multi-vendor multisig — see Multi-Vendor Bitcoin Multisig. The structural answer: don't bet your funds on any single device.

"There is no foolproof way to prevent supply chain attacks at the single-device level."

Treat attestation as a "did the firmware get swapped" check. It is not a "did anyone touch the hardware" check. The latter is, today, an unsolved problem.

Where the actual losses come from

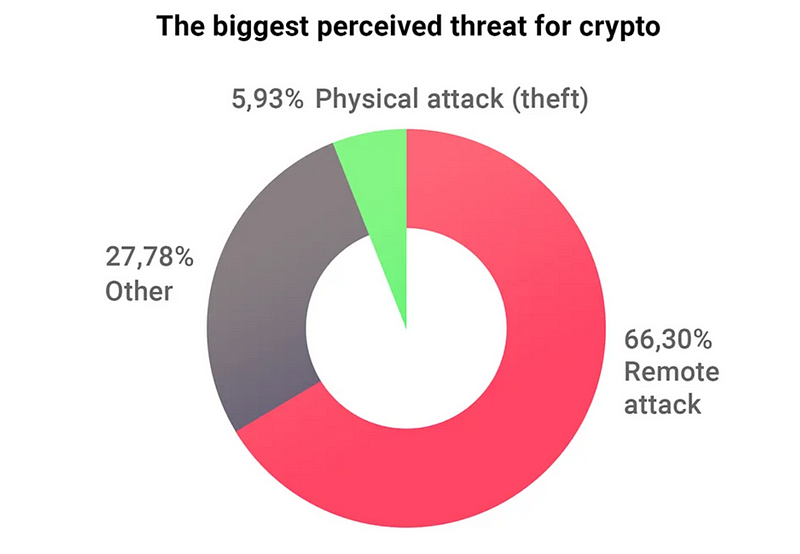

The pie chart that should be on every hardware-wallet marketing page:

The vast majority of hardware-wallet user losses come from remote attacks — phishing, blind signing, mismanagement of keys, malicious dApp approvals — not from the secure-element-level concerns most discussions obsess about.

This is why KeepKey's roadmap emphasizes:

- Transaction insight — making sure the user actually understands what they're signing before the device asks for confirmation.

- Wallet-connect security — extended validation of dApp interactions before they reach the device.

- Multi-chain REST → wallet protocols with built-in safety — replacing the generic Web3 blind-signing surface with chain-native flows where the user sees what they're authorizing in plain language.

The secure-element marketing wars are largely irrelevant to the threats that actually empty wallets.

A note on BIP39 passphrases

The strongest defense against physical attacks on a hardware wallet is the BIP39 passphrase — a "25th word" that combines with your seed to derive a hidden wallet. See BIP39 Passphrase for the full story.

One technical caveat worth knowing: BIP39 seed derivation uses PBKDF2-HMAC-SHA512 with 2048 iterations, a count chosen at the time of the spec to be feasible on early hardware wallets with limited compute. By 2026 standards, 2048 iterations is low. A motivated attacker with a moderately sized GPU farm and your seed phrase could brute-force a typical human-chosen 16-character passphrase (dictionary words, predictable patterns) in weeks to months.

This is fine for most users: the attacker still needs your seed phrase, which they shouldn't have. But if your threat model includes "a sophisticated adversary who has obtained my seed phrase and is trying to crack the passphrase," choose a passphrase with high entropy (random, long, not derivable from anything about you). And know that future BIP39 derivatives will likely raise the iteration count substantially, now that hardware-wallet processing power has grown.
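For readers who want to see the mechanics, the sketch below shows the seed derivation as specified in BIP39 (PBKDF2-HMAC-SHA512, 2048 iterations, salt `"mnemonic"` plus the passphrase) along with a rough search-space estimate. The guess rate is an assumption chosen purely for illustration, not a benchmark of any real hardware, and the two passphrase shapes compared are our own examples.

```python
import hashlib
import unicodedata

BIP39_ITERATIONS = 2048  # fixed by the BIP39 specification


def bip39_seed(mnemonic: str, passphrase: str = "") -> bytes:
    """Derive the 64-byte BIP39 seed from a mnemonic and an optional passphrase."""
    password = unicodedata.normalize("NFKD", mnemonic).encode("utf-8")
    salt = unicodedata.normalize("NFKD", "mnemonic" + passphrase).encode("utf-8")
    return hashlib.pbkdf2_hmac("sha512", password, salt, BIP39_ITERATIONS, dklen=64)


def days_to_exhaust(alphabet_size: int, length: int, guesses_per_second: float) -> float:
    """Days needed to try every passphrase of `length` symbols from the alphabet."""
    return alphabet_size ** length / guesses_per_second / 86_400


# ASSUMPTION: a hypothetical attacker testing one billion passphrases per second.
ASSUMED_RATE = 1e9

four_words = days_to_exhaust(7776, 4, ASSUMED_RATE)  # 4 words from a 7776-word list
random_16 = days_to_exhaust(95, 16, ASSUMED_RATE)    # 16 random printable ASCII chars

print(f"four common words:   {four_words:.0f} days")
print(f"16 random characters: {random_16:.1e} days")
```

Under that assumed rate, four words drawn from a 7776-word list fall in about six weeks, while 16 truly random printable characters take on the order of 10^17 days. Length alone is not the protection. Entropy is.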

Summary

- Hardware wallets do not make you immune to hacking — blind signing remains the biggest unsolved problem in the space.

- Secure enclaves with closed-source firmware are weaker, not stronger, than open-source firmware on auditable hardware. Black boxes cannot be community-reviewed.

- Air-gapped is not more secure than USB. Transport doesn't determine security; firmware does.

- A "hardware wallet" without a screen is not a hardware wallet. It's a blind signer with hardware-wallet pricing.

- Device attestation does not prevent supply-chain hardware tampering. It catches firmware swaps; it does not catch physical modifications.

Where to put your effort: pick a wallet with verifiable open-source firmware, learn what you're actually signing, and use multi-vendor multisig for amounts where single-device failure is unacceptable.

KeepKey is a single-vendor recommendation only for amounts where you're comfortable with single-vendor risk. For everything else, the right answer is multisig. We are explicit about this because — at this stage of the industry — that is the honest answer.

Related

- Verifying KeepKey Firmware — the audit chain we want every wallet to have

- Multi-Vendor Bitcoin Multisig — the structural answer to single-device risk

- Dark Skippy — why closed-source firmware is a present-tense risk

- Hardware Wallets and User Privacy — the related-but-different "what's the companion app doing" story

- BIP39 Passphrase — the strongest single-device defense against physical attacks